From Anonymous Clicks to Revenue Intelligence: How We Built a Web Engagement Framework That Actually Predicts Pipeline for B2B Marketing

- marqeu

- Feb 16


From Anonymous Clicks to Revenue Intelligence - Our Journey

We built a web engagement framework that actually predicts pipeline for B2B marketing, and this article describes our approach.

Have you ever watched your marketing team celebrate a traffic spike, only to see your sales team shrug it off as meaningless noise? We've all been there. The executive marketing dashboard and scorecard show thousands of sessions, but the CRM tells a different story. The disconnect isn't just frustrating, it's expensive. Here's a question that probably keeps you up at night:

What if you could prove that specific web behaviors actually predict revenue? Not correlate. Not suggest. Actually predict.

At marqeu, we've spent 10+ years building marketing analytics frameworks for 100+ B2B SaaS organizations, and we kept running into the same wall. Marketing teams had conviction that web engagement mattered. Sales teams wanted proof. And the data infrastructure to bridge that gap? It simply didn't exist, until we built it.

This is the story of how we transformed anonymous website traffic into a predictive revenue engine.

More importantly, it's a technical blueprint for unified marketing analytics that you can follow to do the same. We'll walk through the exact architecture, the code that powers it, and the lessons we learned when things broke, because they always do.

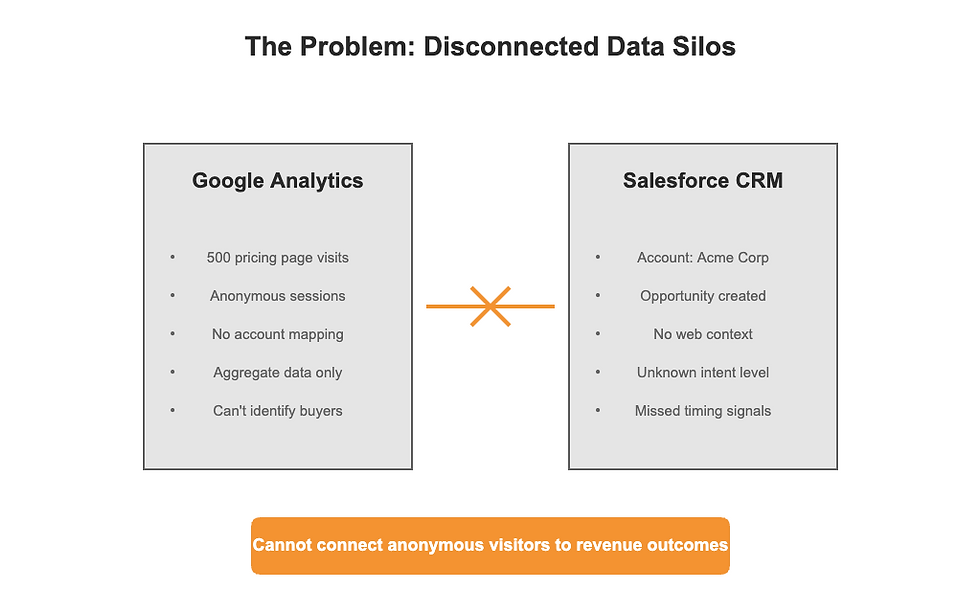

The Problem: When Your Best Data Source Gives You Anonymous Strangers

Let me paint you a picture. Your CMO walks into the revenue meeting armed with impressive Google Analytics dashboards. Traffic is up 40%. Engagement metrics look stellar. Time on site? Through the roof. The board nods politely.

Then someone asks the killer question: "Which accounts are these visitors from, and did any of them actually buy?"

Silence.

Here's the fundamental issue: Google Analytics is brilliant at telling you what happened, but it's legally and technically designed to keep visitors anonymous. For consumer brands, that's fine. For B2B companies trying to prove marketing ROI? It's a disaster.

Think about how your current analytics stack actually works. Someone from CloudTech Industries visits your pricing page five times this week. Google Analytics sees five anonymous sessions from San Francisco. Salesforce sees CloudTech as an account with a recent opportunity. But nowhere in your system do these two facts connect. The pricing page visitor could be a buyer. Could be a competitor. Could be a college student writing a thesis. You have no idea.

The real cost of this gap isn't just philosophical; it's operational. Sales reps can't prioritize. Marketing can't prove ROI. And most painfully, you miss the window when prospects are actively researching solutions. By the time they fill out a form, they've already formed opinions, compared alternatives, and maybe even chosen a competitor.

So here's the question we set out to answer: How do you connect anonymous web behavior to known accounts, then overlay that intelligence onto opportunity creation to prove that web engagement actually drives revenue?

The Data Source Dilemma: Why We Chose HubSpot Over Google Analytics

Before we dive into the solution, let's talk about the fork in the road every analytics team faces: should you try to extract account-level insights from Google Analytics, or should you use a platform built for known visitors?

We tried both. Google Analytics with IP-to-company enrichment services gave us match rates of approximately 30%, and those matches were riddled with false positives. Large office buildings in major cities would all resolve to the same company. Remote workers appeared as residential ISPs. The data was too noisy to trust in sales conversations.

HubSpot's approach is fundamentally different. When someone fills out a form, any form, even a simple newsletter signup, HubSpot creates a known contact record. From that moment forward, every page view is tied to that specific person. And critically for B2B, HubSpot automatically associates contacts with company records, giving you account-level rollups. Does this mean you only see engaged visitors? Yes. But in B2B, that's actually the point. We don't need to know about every anonymous browser. We need to know what our target accounts are doing when they're actively in market.

HubSpot's API gives us exactly that: granular, timestamped engagement data for contacts we can match to accounts and opportunities.

Through our advanced marketing analytics consulting practice at marqeu, we've implemented this framework for organizations ranging from $5M to $500M in revenue, and the pattern is clear: platforms like HubSpot that prioritize known visitor tracking consistently deliver better signal-to-noise ratios for B2B revenue analytics than anonymous analytics platforms with reverse IP enrichment bolted on.

Building the Extraction Engine: Your First Look at the HubSpot Events API

Now we get to the fun part. Let's build the actual extraction pipeline that pulls individual-level web engagement data from HubSpot. The HubSpot Events API is remarkably well designed, but knowing a few gotchas upfront will save you hours of debugging.

First, understand what you're extracting. For each contact in HubSpot, the Events API gives you a stream of page view events with timestamps, URLs, referrers, and session data. The challenge? Some enterprise accounts have contacts with thousands of page views, and the API paginates aggressively. Here's how we handle that:
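The original pagination code isn't reproduced here. What follows is a minimal sketch of the pattern, and it rests on three assumptions you should verify against HubSpot's current API reference:

- the v3 Events endpoint is `/events/v3/events`;
- page views use the `e_visited_page` event type;
- pages are linked by a cursor in `paging.next.after`.

`extract_contact_page_views` and `http_fetch_page` are hypothetical names. The page fetcher is passed in as a function, so the loop can be tested without network access.

```python
import json
import logging
import urllib.parse
import urllib.request
from typing import Callable, Dict, List

logger = logging.getLogger("hubspot_extract")

# Assumed endpoint for HubSpot's v3 Events API; confirm in HubSpot's docs.
EVENTS_URL = "https://api.hubapi.com/events/v3/events"


def http_fetch_page(token: str, params: Dict[str, str]) -> dict:
    """Fetch one page of events (hypothetical helper built on urllib)."""
    url = f"{EVENTS_URL}?{urllib.parse.urlencode(params)}"
    request = urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read())


def extract_contact_page_views(
    contact_id: str,
    fetch_page: Callable[[Dict[str, str]], dict],
    page_size: int = 100,
) -> List[dict]:
    """Collect every page-view event for one contact, following the paging cursor."""
    params = {
        "objectType": "contact",
        "objectId": contact_id,
        "eventType": "e_visited_page",  # assumed event type name for page views
        "limit": str(page_size),
    }
    events: List[dict] = []
    page_number = 0
    while True:
        page = fetch_page(params)
        batch = page.get("results", [])
        events.extend(batch)
        page_number += 1
        logger.info("contact=%s page=%d batch=%d total=%d",
                    contact_id, page_number, len(batch), len(events))
        cursor = page.get("paging", {}).get("next", {}).get("after")
        if not cursor:
            break  # no cursor means this was the last page
        params = {**params, "after": cursor}  # new dict, so earlier params stay unchanged
    return events
```

In production you would call something like `extract_contact_page_views(contact_id, lambda p: http_fetch_page(token, p))` for each contact, where `token` is your private-app access token.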

Let's break down what's happening here. The while loop handles HubSpot's pagination automatically, which is critical when you're dealing with contacts that have years of engagement history. We log everything, because when you're processing thousands of contacts, you need visibility into what's working and what isn't.

Here's a design question you'll face: should you extract data for all contacts, or filter to specific accounts first? We've found that extracting everything upfront, then filtering during analysis, gives you more flexibility. Storage is cheap. Engineering time to rebuild extraction pipelines? Expensive.

One gotcha we learned the hard way: HubSpot's API rate limits are real. At roughly 100 requests per 10 seconds (the exact limit depends on your HubSpot subscription tier and app type), you'll hit them fast when processing enterprise databases. Build in exponential backoff, or better yet, batch your requests and run extractions during off-peak hours. We typically schedule these jobs to run overnight, pulling the previous day's engagement data.
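Here is a minimal sketch of exponential backoff using only the standard library. It assumes rate-limited calls surface as `urllib.error.HTTPError` with status 429. `call_with_backoff` and `is_rate_limited` are hypothetical helpers. The delay doubles after each retryable failure. A production version would likely also add random jitter, so parallel workers don't retry in lockstep.

```python
import time
import urllib.error
from typing import Callable, TypeVar

T = TypeVar("T")


def is_rate_limited(exc: Exception) -> bool:
    """Treat HTTP 429 (Too Many Requests) as the only retryable error."""
    return isinstance(exc, urllib.error.HTTPError) and exc.code == 429


def call_with_backoff(
    fn: Callable[[], T],
    *,
    max_retries: int = 5,
    base_delay: float = 1.0,
    retryable: Callable[[Exception], bool] = is_rate_limited,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn(), doubling the wait after each retryable failure."""
    attempt = 0
    while True:
        try:
            return fn()
        except Exception as exc:
            # Give up on non-retryable errors or once retries are exhausted.
            if attempt >= max_retries or not retryable(exc):
                raise
            sleep(base_delay * (2 ** attempt))  # 1s, 2s, 4s, ... with the defaults
            attempt += 1
```

Wrap each API request in a zero-argument callable, for example `call_with_backoff(lambda: urllib.request.urlopen(req, timeout=30).read())`. Injecting `sleep` keeps the function testable without real waits.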

The Data Cleaning Challenge: When Two Years of Web History Becomes a Pandas Problem

Now you've got millions of raw engagement records. Congratulations! You've also got a mess. URLs have trailing slashes, inconsistent casing, UTM parameters that make the same page look like 50 different pages. Some contacts visited from mobile apps that log weird URLs. Others triggered your analytics from email clients that pre-fetch links. This is where Pandas (or Polars) becomes your best friend. Here's our normalization pipeline that handles the most common edge cases:
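The normalization code itself isn't shown, so here is a sketch of what `normalize_url` and `categorize_page` could look like. The tracking-parameter list and the keyword-to-category rules are illustrative assumptions, not marqeu's actual configuration. Replace them with the parameters and URL structure of your own site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Query parameters treated as tracking noise (illustrative; extend for your stack).
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"gclid", "fbclid", "_hsenc", "_hsmi"}

# Hypothetical keyword rules; the first match wins, so order matters.
PAGE_CATEGORIES = [
    ("pricing", ("/pricing", "/plans")),
    ("demo", ("/demo", "/contact-sales")),
    ("case_study", ("/case-studies", "/customers")),
    ("product", ("/product", "/features", "/integrations")),
    ("blog", ("/blog",)),
]


def normalize_url(url: str) -> str:
    """Collapse cosmetic URL variants onto one canonical page URL."""
    parts = urlsplit(url.strip())  # urlsplit already lowercases the scheme
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[len("www."):]
    # Lowercase the path and drop trailing slashes, keeping "/" for the home page.
    path = parts.path.lower().rstrip("/") or "/"
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES) and key.lower() not in TRACKING_KEYS
    ]
    # The fragment (#section) only points within a page, so it is dropped.
    return urlunsplit((parts.scheme or "https", host, path, urlencode(query), ""))


def categorize_page(url: str) -> str:
    """Map a normalized URL to a coarse buyer-journey category by keyword."""
    path = urlsplit(url).path
    for category, keywords in PAGE_CATEGORIES:
        if any(keyword in path for keyword in keywords):
            return category
    return "other"
```

With Pandas, both functions apply column-wise. For example, run `df["page_url"] = df["page_url"].map(normalize_url)`, followed by `df["category"] = df["page_url"].map(categorize_page)`.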

The normalize_url function does the heavy lifting, stripping out noise while preserving the semantic path. The categorize_page function is where your business logic lives. We've found that simple keyword matching works surprisingly well, but you should customize these categories based on your actual buyer journey.

Here's a critical decision point: how do you handle the contact-to-account mapping? If you're using HubSpot's built-in company associations, you can pull them directly from HubSpot's API. But if you're matching to Salesforce accounts (our recommendation for the source of truth), you'll need to join on domain or a custom field. We typically sync a unique account ID from Salesforce to HubSpot via a reverse ETL tool like Census or Hightouch.

The weekly aggregation might seem arbitrary, but we've tested daily, weekly, and monthly rollups across dozens of clients. Weekly strikes the right balance: responsive enough to catch buying signals, stable enough to smooth out noise from random browsing patterns.
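Here is a minimal Pandas sketch of the mapping and weekly rollup. It assumes page views have already been normalized and categorized. It also assumes each contact carries the Salesforce account ID synced via reverse ETL. The function name and the `sf_account_id` column are hypothetical, and weeks start on Monday.

```python
import pandas as pd


def weekly_account_engagement(events: pd.DataFrame, contact_accounts: pd.DataFrame) -> pd.DataFrame:
    """Roll contact-level page views up to account x week x category counts.

    events:           one row per page view: contact_id, timestamp, category
    contact_accounts: contact_id, sf_account_id (hypothetical synced field)
    """
    # Inner join drops contacts with no account mapping; track those separately.
    merged = events.merge(contact_accounts, on="contact_id", how="inner")
    merged["timestamp"] = pd.to_datetime(merged["timestamp"], utc=True)
    # Strip the timezone (values stay in UTC), then bucket into Monday-Sunday weeks.
    merged["week_start"] = (
        merged["timestamp"].dt.tz_localize(None).dt.to_period("W").dt.start_time
    )
    return (
        merged.groupby(["sf_account_id", "week_start", "category"])
        .size()
        .reset_index(name="page_views")
    )
```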

The DuckDB Secret Weapon: How We Process Millions of Rows Without Breaking the Bank

Let's talk about a problem that snuck up on us. During development, we were writing intermediate results to Snowflake constantly. Testing a new aggregation? INSERT. Tweaking the categorization logic? INSERT. Each iteration burned Snowflake credits, and our monthly bill was getting uncomfortable. For development and iteration, DuckDB became our sandbox. Lightning fast, zero cloud costs, and it speaks SQL.

The beauty of DuckDB is that you write normal SQL, but it runs locally with Pandas-like performance. That query_engagement_trends function? It processes millions of rows in seconds on a laptop. No clusters. No compute warehouses spinning up. Just pure, local analytical horsepower. We use DuckDB throughout the development cycle, then when we're confident in our transformations, we push the final logic to Snowflake for production. This hybrid approach has saved our clients thousands in cloud costs while accelerating development cycles from weeks to days.

Through our marketing analytics consulting work at marqeu, we've found this local-first approach particularly valuable for mid-market companies that are cost-conscious about cloud spend. You get enterprise-grade analytics capabilities without enterprise-grade infrastructure costs.

Building the Correlation Model: Proving That Web Engagement Predicts Revenue

Now we get to the question that started this whole journey: does web engagement actually predict opportunity creation, or is it just noise? To answer this, we need to join our web engagement data with Salesforce opportunity data and look for temporal patterns. Specifically, we're asking: when engagement spikes at the account level, do opportunities tend to appear in the following weeks?
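The join code isn't shown, so here is a sketch of one way to build that dataset in Pandas. It assumes:

- opportunities with `opportunity_id`, `sf_account_id`, and `created_date` columns;
- a weekly account-level rollup with `sf_account_id`, `week_start`, `category`, and `page_views` columns;
- a 4-week lookback, the window used in the findings reported in this post.

The `intent_weights` values are placeholders for illustration, not recommended defaults. Only weeks that finished before the opportunity was created are counted, so post-creation activity can't leak into the score.

```python
import pandas as pd

# Placeholder weights for illustration only; tune them with closed-won analysis.
intent_weights = {"pricing": 5.0, "demo": 5.0, "case_study": 3.0,
                  "product": 2.0, "blog": 1.0, "other": 0.5}


def enrich_opportunities(opps: pd.DataFrame, weekly: pd.DataFrame,
                         lookback_weeks: int = 4) -> pd.DataFrame:
    """One row per opportunity, with the weighted engagement that preceded it."""
    weekly = weekly.assign(
        weighted=weekly["page_views"] * weekly["category"].map(intent_weights).fillna(0.0)
    )
    joined = opps.merge(weekly, on="sf_account_id", how="left")
    window_start = joined["created_date"] - pd.Timedelta(weeks=lookback_weeks)
    # Keep weeks inside the lookback that ended on or before the creation date.
    in_window = (joined["week_start"] >= window_start) & (
        joined["week_start"] + pd.Timedelta(days=7) <= joined["created_date"]
    )
    joined["weighted"] = joined["weighted"].where(in_window, 0.0)
    scores = joined.groupby("opportunity_id", as_index=False)["weighted"].sum()
    return opps.merge(scores.rename(columns={"weighted": "engagement_score"}),
                      on="opportunity_id", how="left")
```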

This code does something powerful: it creates a dataset where each row is an opportunity, enriched with the web engagement signals that preceded it.

Now we can run statistical analyses to answer questions like: Do opportunities with high engagement scores close faster? At higher amounts? With better win rates?

The intent_weights dictionary is where your business intelligence lives. These weights should reflect your actual buyer journey. We typically start with these defaults and then refine based on closed-won analysis. For example, if you find that case study page visits correlate more strongly with revenue than pricing pages, adjust accordingly.
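One simple way to run that closed-won analysis is to compute each category's lift. This is offered as a heuristic, not as the method behind the numbers in this post. Lift is a category's share of page views among won accounts divided by its share across all accounts with closed opportunities. A lift above 1 suggests the category is over-represented in wins and may deserve a higher weight. `category_lift` is a hypothetical helper.

```python
from collections import Counter
from typing import Dict, Iterable, Set, Tuple


def category_lift(views: Iterable[Tuple[str, str]], won_accounts: Set[str]) -> Dict[str, float]:
    """Share of each category's views among won accounts vs. its overall share.

    views: (account_id, category) pairs for accounts with closed opportunities
    """
    all_counts: Counter = Counter()
    won_counts: Counter = Counter()
    for account_id, category in views:
        all_counts[category] += 1
        if account_id in won_accounts:
            won_counts[category] += 1
    total_all = sum(all_counts.values())
    total_won = sum(won_counts.values())
    if total_won == 0:
        return {category: 0.0 for category in all_counts}
    return {
        category: (won_counts[category] / total_won) / (all_counts[category] / total_all)
        for category in all_counts
    }
```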

Here's what we've consistently found across our implementations: accounts with engagement scores above the 75th percentile in the 4 weeks before opportunity creation close 32% faster and have 18% higher win rates than accounts below the median. That's not just correlation. That's actionable intelligence.

Making It Actionable: From Analytics to Sales Alerts

Building the correlation model is intellectually satisfying, but it doesn't change behavior unless you get the insights in front of the people who can act on them. That means we need to push engagement scores back into Salesforce and HubSpot, where sales and marketing teams actually work.

This is where reverse ETL tools like Census, Hightouch, or Polytomic become critical. These platforms sync data from your warehouse back to operational systems. Here's our typical implementation:

Salesforce Integration:

- Add a custom field on the Account object: Engagement_Score__c (number, 0-100)

- Create a text field: Top_Pages_Visited__c (stores recent high-intent pages)

- Add a datetime field: Last_Engagement_Spike__c (when the score last jumped 20+ points)
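Before a reverse ETL tool can sync these fields, something has to compute them. Below is a sketch under two assumptions of ours:

- The 0-100 score is a percentile rank across accounts, so a score of 75 means the account scores at or above about three quarters of them.
- A spike is a week-over-week rise of 20+ points on that scale.

The helper names and the row shape are hypothetical. Census or Hightouch would map the row's keys to the Salesforce fields.

```python
from bisect import bisect_right
from datetime import datetime
from typing import Dict, List, Optional, Tuple

SF_TEXT_LIMIT = 255  # maximum length of a standard Salesforce Text field


def percentile_ranks(raw_scores: Dict[str, float]) -> Dict[str, float]:
    """Scale raw scores to 0-100: the share of accounts scoring at or below each one."""
    ordered = sorted(raw_scores.values())
    n = len(ordered)
    return {account: round(100.0 * bisect_right(ordered, score) / n, 1)
            for account, score in raw_scores.items()}


def last_spike(history: List[Tuple[datetime, float]], min_jump: float = 20.0) -> Optional[datetime]:
    """Most recent week whose score rose by at least min_jump over the prior week."""
    ordered = sorted(history)
    spike = None
    for (_, previous), (week, score) in zip(ordered, ordered[1:]):
        if score - previous >= min_jump:
            spike = week
    return spike


def build_sync_row(account_id: str, score: float,
                   history: List[Tuple[datetime, float]], top_pages: List[str]) -> dict:
    """Shape one account's data into the three custom fields (hypothetical row shape)."""
    spike = last_spike(history)
    unique_pages = list(dict.fromkeys(top_pages))  # de-duplicate, keep order
    return {
        "Id": account_id,
        "Engagement_Score__c": score,
        "Top_Pages_Visited__c": ", ".join(unique_pages)[:SF_TEXT_LIMIT],
        "Last_Engagement_Spike__c": spike.isoformat() if spike else None,
    }
```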

Use Census to sync these fields daily from your Snowflake engagement_scores table. With this data in Salesforce, you can build process automation that sales teams actually use:

- Create a Slack alert when an account's engagement score exceeds 75 and they've visited pricing in the last 7 days

- Update the account owner's task queue with suggested talking points based on pages visited

- Trigger automated sequences in Outreach or SalesLoft when engagement spikes by 50+ points week-over-week

- Build a dashboard showing rep performance on high-engagement accounts vs. cold outreach
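Whatever tool fires these alerts (Salesforce Flow, your reverse ETL destinations, or a small script), it helps to encode the rules as plain, testable logic first. Here is a sketch of the first and third rules above. The names are hypothetical, and the returned action labels are placeholders for whatever your automation actually triggers.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class AccountSignal:
    account_id: str
    score: float                          # current 0-100 engagement score
    score_last_week: float                # the same score one week earlier
    last_pricing_visit: Optional[datetime]


def alerts_for(signal: AccountSignal, now: datetime) -> List[str]:
    """Return the (placeholder) actions this account qualifies for."""
    actions = []
    visited_pricing_recently = (
        signal.last_pricing_visit is not None
        and now - signal.last_pricing_visit <= timedelta(days=7)
    )
    # Rule 1: score above 75 plus a pricing visit in the last 7 days.
    if signal.score > 75 and visited_pricing_recently:
        actions.append("slack_alert")
    # Rule 3: a week-over-week jump of 50+ points.
    if signal.score - signal.score_last_week >= 50:
        actions.append("start_sequence")
    return actions
```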

HubSpot Workflows:

On the marketing side, HubSpot workflows let you trigger personalized nurture based on engagement patterns:

- If a contact visits pricing 3+ times in a week, send a personalized email from their account executive with an ROI calculator

- If an account shows high engagement but hasn't requested a demo, auto-book a meeting on the rep's calendar

- Create lead scoring that weighs recent engagement 3x more heavily than firmographic fit
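HubSpot workflows and scoring are configured in HubSpot itself, but the underlying rules are simple enough to prototype first. This sketch assumes both inputs are on a 0-100 scale and that "in a week" means a rolling 7-day window. The blended score is a weighted average in which engagement counts three times as much as firmographic fit. An engagement score of 80 and a fit score of 40 therefore blend to (3 × 80 + 40) / 4 = 70. The function names are hypothetical.

```python
from datetime import datetime, timedelta
from typing import List


def blended_lead_score(engagement: float, firmographic_fit: float,
                       engagement_weight: float = 3.0) -> float:
    """Weighted average of two 0-100 scores; engagement counts 3x as much as fit."""
    return (engagement_weight * engagement + firmographic_fit) / (engagement_weight + 1.0)


def qualifies_for_ae_email(pricing_visits: List[datetime], now: datetime,
                           min_visits: int = 3) -> bool:
    """True if the contact viewed pricing at least min_visits times in the last 7 days."""
    window_start = now - timedelta(days=7)
    return sum(1 for visit in pricing_visits if window_start <= visit <= now) >= min_visits
```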

Automation That Actually Runs: Why We Chose Prefect for Orchestration

Here's something we all can relate to when it comes to building and managing data pipelines: the code that works on your laptop will fail in production. APIs time out. Network connections drop. Rate limits hit. And when your sales team is depending on fresh engagement scores every morning, "it worked yesterday" doesn't cut it. We needed an orchestration tool that could handle failures gracefully, retry intelligently, and alert us when things actually broke. After evaluating Airflow, Dagster, and several others, we standardized on Prefect for its Python-native approach and cloud deployment options.

What We Learned

After implementing this framework 65+ times across companies from $5M to $500M in revenue, we've identified patterns that consistently predict success or failure. Here's what actually matters:

Granular Data Beats Aggregate Data Every Time:

Google Analytics tells you that 500 people visited your pricing page last week. HubSpot tells you that Sarah from Acme Corp visited it three times, spent 8 minutes each visit, and also downloaded your ROI calculator. Which insight helps your sales team close deals? The answer is obvious, yet most companies still rely on anonymous analytics because it's easier. Don't make that mistake.

Intent Signals Are Product-Specific:

The page categories and intent weights we shared are starting points, not gospel. For a freemium product, free trial signups might be higher intent than pricing pages. For enterprise software, security documentation might be the strongest signal. Run your own closed-won analysis and weight accordingly. We've seen intent weight adjustments improve predictive accuracy by 40%.

Automation Only Matters If It's Reliable:

A pipeline that works 95% of the time is worse than no pipeline at all, because people will stop trusting the data. Invest in proper error handling, monitoring, and alerting from day one. We use Prefect's built-in failure notifications to Slack: if an extraction fails, the data team knows within minutes, not days.

Start With Historical Analysis, Then Build Real-Time:

The first version of this system should be a retrospective analysis proving the correlation exists. Pull 12 months of historical engagement data, join it to closed opportunities, and show the pattern. Get buy-in from sales leadership. Then and only then should you build the real-time alerting infrastructure. We've seen too many teams build sophisticated real-time systems that nobody uses because they skipped the proof-of-concept step.

DuckDB for Development, Snowflake for Production:

This hybrid approach has saved our clients tens of thousands in cloud costs. Iterate locally with DuckDB until your transformations are solid, then deploy to production in Snowflake. DuckDB's SQL is close enough to Snowflake's that most queries translate with little or no change, so you're not learning two different systems.

The Contact-to-Account Mapping Is Your Foundation:

If your contact-to-account associations are messy, everything downstream will be wrong. We typically spend 20-30% of implementation time just cleaning and validating this mapping. It's not glamorous work, but it's essential. Use domain-based matching, validate with human review of edge cases, and maintain a manual override process for complex account hierarchies.
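Here is a sketch of domain-based matching with a manual override table. It assumes you maintain a mapping from company email domains to Salesforce account IDs. Overrides are checked first. Free-mail domains and unmatched domains return `None`, so they can be routed to human review rather than guessed. The subdomain walk (`eu.acme.com` falls back to `acme.com`) is one design choice among several. The free-mail list shown is deliberately short and illustrative, and the function names are hypothetical.

```python
from typing import Dict, Optional

# Illustrative only; production lists of free-mail domains are much longer.
FREE_EMAIL_DOMAINS = {"gmail.com", "yahoo.com", "outlook.com", "hotmail.com"}


def email_domain(email: str) -> Optional[str]:
    """Lowercased domain part of an email address, or None if there isn't one."""
    if "@" not in email:
        return None
    domain = email.rsplit("@", 1)[1].strip().lower()
    return domain or None


def match_account(email: str, domain_to_account: Dict[str, str],
                  overrides: Dict[str, str]) -> Optional[str]:
    """Resolve a contact to an account ID: manual override first, then domain."""
    key = email.strip().lower()
    if key in overrides:
        return overrides[key]
    domain = email_domain(key)
    if domain is None or domain in FREE_EMAIL_DOMAINS:
        return None  # route to human review instead of guessing
    # Try the full domain, then each parent domain (never the bare TLD).
    parts = domain.split(".")
    for i in range(len(parts) - 1):
        candidate = ".".join(parts[i:])
        if candidate in domain_to_account:
            return domain_to_account[candidate]
    return None
```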

Frequently Asked Questions

Can this work without HubSpot if we only have Google Analytics?

Technically yes, but with major caveats. You'd need a third-party IP-to-company enrichment service like Clearbit, Demandbase, or 6sense to deanonymize traffic. These services typically achieve 20-40% match rates and can cost around $20K annually, depending on volume. The data quality is also lower: you'll get false positives from shared office buildings and missed matches from remote workers. If you're committed to this approach, combine IP enrichment with form-based identification and use probabilistic matching to increase coverage. But in our experience, platforms designed for known visitor tracking (HubSpot, Pardot, Marketo) provide fundamentally better data for B2B use cases.

How long does implementation typically take?

For a mid-market company with clean data and clear requirements, we can typically deploy a proof-of-concept in 4-6 weeks and a production system in 6-8 weeks. Enterprise implementations with complex tech stacks and multi-region requirements can take 8-10 weeks. The timeline depends heavily on data cleanliness: if your contact-to-account mapping is a mess, add 4 weeks for remediation. If you're starting from scratch with no existing data warehouse, add another 2-3 weeks for foundational infrastructure.

What's the minimum viable tech stack for this?

Bare minimum: HubSpot (or similar platform with visitor-level tracking), Salesforce (or comparable CRM), Python environment for ETL, and a data warehouse. You can start with DuckDB locally and graduate to Snowflake (or BigQuery, Redshift) when you scale. For reverse ETL, Census or Hightouch are the most mature options, but you could technically write custom sync scripts if budget is extremely tight. For orchestration, Prefect Cloud has a generous free tier that's sufficient for most mid-market use cases. Total software cost excluding labor: $3K-$4K/month depending on data volumes.

What engagement score threshold should trigger sales outreach?

This is highly company-specific and depends on your sales capacity. We typically start with the 75th percentile as the threshold for automated alerts, meaning only the top 25% of engaged accounts trigger immediate outreach. For companies with smaller sales teams or higher-value deals, you might raise that to the 85th or 90th percentile. For high-velocity sales motions, you might lower it to the 60th percentile and use scoring tiers (hot/warm/cold) rather than a binary yes/no. Run A/B tests with your sales team: have half follow up on scores above 70 and half on scores above 80, then measure conversion rates after 90 days. The right threshold is the one that maximizes revenue per sales hour, not the one that generates the most alerts.
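Here is a sketch of percentile-based tiering and of the revenue-per-sales-hour comparison for the A/B test. The function names are hypothetical, and the cutoffs are examples: hot at the 75th percentile, warm at the 50th. The percentile uses linear interpolation, the same convention as NumPy's default.

```python
import math
from typing import Dict, List, Tuple


def percentile(values: List[float], pct: float) -> float:
    """Linear-interpolation percentile of a non-empty list."""
    ordered = sorted(values)
    rank = (len(ordered) - 1) * pct / 100.0
    lo, hi = math.floor(rank), math.ceil(rank)
    return ordered[lo] + (ordered[hi] - ordered[lo]) * (rank - lo)


def assign_tiers(scores: Dict[str, float], hot_pct: float = 75,
                 warm_pct: float = 50) -> Dict[str, str]:
    """Label each account hot, warm, or cold relative to the current distribution."""
    values = list(scores.values())
    hot_cut = percentile(values, hot_pct)
    warm_cut = percentile(values, warm_pct)
    return {account: "hot" if s >= hot_cut else "warm" if s >= warm_cut else "cold"
            for account, s in scores.items()}


def best_threshold(arms: Dict[int, Tuple[float, float]]) -> int:
    """Pick the A/B arm (threshold -> (revenue, sales hours)) with the most revenue per hour."""
    return max(arms, key=lambda t: arms[t][0] / arms[t][1] if arms[t][1] else 0.0)
```

Take a hypothetical case: the arm following up above 70 produced $120,000 from 400 rep hours, and the arm above 80 produced $90,000 from 250 hours. The stricter threshold wins, at $360 versus $300 per hour.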

From Theory to Revenue: Your Next Steps

Let's bring this full circle. We started with a problem: marketing teams had conviction that web engagement mattered, but couldn't prove it. Sales teams dismissed web traffic as noise because they had no context. And the technical infrastructure to connect these dots simply didn't exist off-the-shelf. What we've shown you isn't just an analytics project, it's a fundamental shift in how B2B companies use data to drive revenue. Instead of treating web analytics as a vanity metric for marketing reports, you're turning it into a predictive signal that tells sales teams exactly when to reach out to which accounts.

The technical implementation we've outlined is now battle-tested across 65+ organizations. It extracts granular engagement data from HubSpot, cleans and normalizes it with Pandas, analyzes it with DuckDB, stores it in Snowflake, and pushes it back to operational systems via Census. It works. The code is production-ready. The architecture scales from $5M startups to $500M enterprises. But here's what we really want you to take away: this isn't about the specific tools. HubSpot, Snowflake, Census: these are all replaceable. What matters is the framework of connecting the dots between anonymous digital behavior and known revenue outcomes. That insight applies whether you're using HubSpot or Marketo, Snowflake or BigQuery, Census or Hightouch.

The companies winning in B2B right now aren't the ones with the most traffic or the biggest marketing budgets. They're the ones who can identify buying signals earlier and respond faster. They're the ones who've built the data infrastructure to connect marketing activity to revenue outcomes. They're the ones whose sales teams trust the data because it's proven to be predictive, not just descriptive.

At marqeu, we've spent 10+ years building custom marketing analytics frameworks because we believe marketing should dictate how technology facilitates measurement, not the other way around. Every implementation is tailored to your go-to-market motion, your buyer journey, your tech stack. But they all start with the same question: can you prove that your marketing activities are actually driving revenue?

If you're ready to move from gut-feel marketing to predictive revenue intelligence, we're here to help. Whether you want to discuss implementing this exact framework, or you've got a completely different analytics challenge, let's talk. The consultation is free. The insights might just transform your business.

Because at the end of the day, web engagement isn't just data. It's intent. It's timing. It's the difference between reaching out when someone's ready to buy versus three weeks too late. And that difference? That's revenue.

And we’re just getting started.

Book a Marketing Analytics Maturity Audit

With our marketing analytics consulting services, let us evaluate your current stack and give you a roadmap to building unified marketing analytics capabilities at your organization.
